Supplementary Material for

Multi-Target Machine Translation with Multi-Synchronous Context-free Grammars

Graham Neubig, Philip Arthur, Kevin Duh

Proceedings of NAACL 2015 (PDF)

This is the supplementary material for the paper "Multi-Target Machine Translation with Multi-Synchronous Context-free Grammars" presented at NAACL 2015.

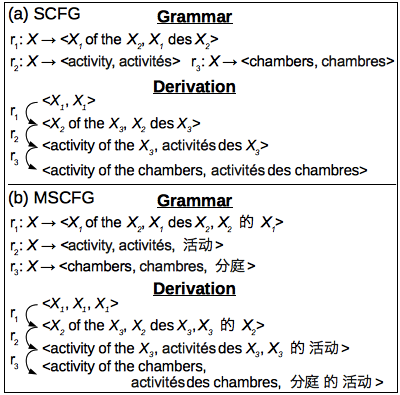

The main contribution of the paper is to show that, when translating from language A to language B, we can simultaneously translate into language C and thereby increase the accuracy of our translations into language B. This is not so intuitive, so how does it work? The figure on the right shows an example. If we asked an English speaker who could see only the source (Arabic) and the target (Chinese) which of the three translations in the figure is correct, they could probably not choose the correct answer. However, if we also showed them the English translation, they could probably pick the third answer as the best one. In a way, this is because the English speaker has a strong English "language model" in their brain, which allows them to pick the correct answer.
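The intuition above can be sketched in a few lines of Python. This is only a toy illustration, not the model from the paper: the candidate strings, the scores, the bigram table, and the names `lm_score` and `pick_best` are all made up for this example. Three candidate pairs tie on their translation score, and a small language model over the second target language breaks the tie.

```python
# Hypothetical candidates: (target-1 output, target-2 output, translation log score).
# The target-1 strings are placeholders; all three tie on the translation score.
candidates = [
    ("T1 candidate a", "the house red", -1.0),
    ("T1 candidate b", "red the house", -1.0),
    ("T1 candidate c", "the red house", -1.0),
]

# Toy bigram log-probabilities for the second target language (made-up values).
BIGRAM_LOGPROB = {
    ("<s>", "the"): -0.5,
    ("the", "red"): -1.0,
    ("red", "house"): -0.7,
    ("house", "</s>"): -0.4,
}
UNSEEN_LOGPROB = -5.0  # flat penalty for any bigram not in the table


def lm_score(sentence):
    """Sum bigram log-probabilities over the sentence, with boundary markers."""
    tokens = ["<s>"] + sentence.split() + ["</s>"]
    return sum(BIGRAM_LOGPROB.get(pair, UNSEEN_LOGPROB)
               for pair in zip(tokens, tokens[1:]))


def pick_best(cands, lm_weight=1.0):
    """Return the candidate maximizing translation score + weighted target-2 LM score."""
    return max(cands, key=lambda c: c[2] + lm_weight * lm_score(c[1]))


best = pick_best(candidates)
print(best[0])
```

With the language model switched off (`lm_weight=0`) the three candidates are indistinguishable; with it switched on, the fluent second-target output pulls its paired first-target output to the top.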

This work incorporates this intuition into machine translation by generating translations in multiple languages and using a strong language model of a second target language to help improve translation accuracy into the first target language. We do this by introducing a new framework of multi-synchronous context-free grammars (MSCFGs). An example of how multi-synchronous context-free grammars work is shown on the right. Compared to traditional synchronous context-free grammars, which are generative grammars over two languages, multi-synchronous context-free grammars generate strings in any number of languages. In the paper, we describe the details of learning these grammars from data, and how to perform translation and search.
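To make the idea of a grammar over more than two languages concrete, here is a minimal sketch of a rule with one source side and several target sides, and of a derivation that yields all languages at once. The class names (`Rule`, `Node`), the `X0`/`X1` nonterminal convention, and the English/French/Spanish toy rules are assumptions made for this illustration; they are not the data structures used in Travatar or the released scripts.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Rule:
    """One multi-synchronous rule: a source side plus one side per target language."""
    source: Tuple[str, ...]
    targets: Tuple[Tuple[str, ...], ...]


@dataclass
class Node:
    """A derivation node; children[i] fills the linked nonterminal "Xi" on every side."""
    rule: Rule
    children: List["Node"]


def is_nonterminal(symbol):
    # Illustrative convention: "X0", "X1", ... are linked nonterminals.
    return symbol.startswith("X") and symbol[1:].isdigit()


def realize(node, pick_side):
    """Expand one side of the derivation, chosen by pick_side(rule)."""
    words = []
    for symbol in pick_side(node.rule):
        if is_nonterminal(symbol):
            words.extend(realize(node.children[int(symbol[1:])], pick_side))
        else:
            words.append(symbol)
    return words


def yields(node):
    """Return the source string and the list of target strings of a derivation."""
    source = " ".join(realize(node, lambda r: r.source))
    targets = [
        " ".join(realize(node, lambda r, i=i: r.targets[i]))
        for i in range(len(node.rule.targets))
    ]
    return source, targets


# Toy rules: English source, French and Spanish targets.
red = Rule(("red",), (("rouge",), ("roja",)))
house = Rule(("house",), (("maison",), ("casa",)))
# Both targets reorder the adjective and the noun relative to the source.
phrase = Rule(("the", "X0", "X1"), (("la", "X1", "X0"), ("la", "X1", "X0")))

tree = Node(phrase, [Node(red, []), Node(house, [])])
source, targets = yields(tree)
print(source)
print(targets)
```

Because every side of a rule shares the same linked nonterminals, a single derivation fixes the output in all target languages simultaneously, which is what lets a language model on one target influence the choice made for another.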

Results on a number of languages show that in some cases this allows us to obtain significant improvements in translation accuracy, on the order of 0.8-1.5 BLEU points.

Software

- Training MSCFGs can be performed with the scripts for this paper released on GitHub.

- Decoding using MSCFGs is implemented in the Travatar toolkit.

- Data processing and experiment scripts can be found here.

If you have any problems using the scripts, please feel free to contact neubig (@is.naist.jp)!